PRECISION

PROCESS CONTROL MEASUREMENT

We build rugged, real-time, non-nuclear process control systems that empower engineers, protect processes, and reduce downtime. From data to decisions — we put control in your hands.

TRUSTED BY ENGINEERS

PROVEN IN HARSH CONDITIONS.

Built for mining, dredging, oil & gas, and beyond.

Installed inline, built to last, and powered by real-time data, Red Meters deliver results where legacy systems fall short.

DATA ANYTIME ANYWHERE.

Know What’s Moving Through Your Pipeline.

Anytime. Anywhere.

The Ruby™ software app gives you real-time insight into your system, wherever you are. Monitor key metrics, track performance, and stay connected - remotely and instantly.

Do you know what’s happening in your pipeline?

With Rugged Ruby 2.0, now you can.

SMARTER INFRASTRUCTURE STARTS WITH SMARTER DATA.

From compliance to optimization, your team needs tools that evolve with your process.

Red Meters are upgradable, cloud-enabled, and engineered for long-term process control performance, no matter the challenge.

SMARTER TECH. STRONGER OPERATIONS.

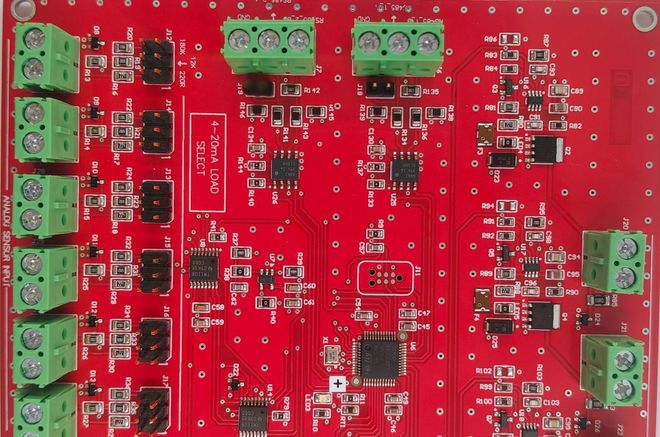

Red Meters combines precision engineering with AI-powered analytics to transform how density and flow are measured.

No radiation. No outdated gauges. Just real-time insight from the world’s first patented inline deflection system — Red Meters Rugged Process Control Series powered by Ruby™ 2.0.

Delivering Real-Time, Non-Nuclear Density Process Control Meters for Slurry & Dry Bulk

Engineered to reduce downtime, cut maintenance, and improve process visibility.

No fluff.

No hype.

Just results.

LIVE FROM THE MINEFACE.

LEARN MORE.

Have a question? We’re here to help.

Send us a message and we’ll be in touch.